Amazon S3 is called the Simple Storage Service, but it is not only simple; it is also very powerful. It supports many features that are useful in everyday work.

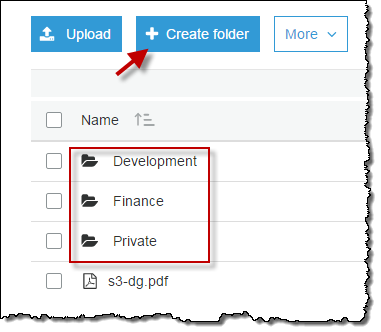

But of course, the main feature is the ability to store data by key.

Storage Service. You can store any data under a key in S3 and then access and read it later. To do that, a bucket must be created first. A bucket is similar to a namespace in C#. One AWS account is limited to 100 buckets, and bucket names are shared across all Amazon accounts.

So you must select a unique name for it. The code that stores some data under a key in a bucket is the following:

    // Requires: using Amazon.S3; using Amazon.S3.Model;
    // client is an already-created Amazon S3 client.
    PutObjectRequest request = new PutObjectRequest();
    request.WithBucketName(BUCKETNAME);
    request.WithKey(S3KEY);
    request.WithContentBody("This is body of S3 object.");
    client.PutObject(request);

Here an S3 object is created with the key defined in the constant S3KEY, and a string is written into it. The content of a file can also be put into S3 (instead of a string). To do that, the code needs a small modification:

    PutObjectRequest request = new PutObjectRequest();
    request.WithBucketName(BUCKETNAME);
    request.WithKey(S3KEY);
    // Upload the file at pathToFile instead of an inline string.
    request.WithFilePath(pathToFile);
    client.PutObject(request);

To write a file, the method WithFilePath is used instead of the method WithContentBody.
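Because bucket names are shared across all accounts, it can save a round trip to check a candidate name against the S3 naming rules before trying to create the bucket. The following is a minimal shell sketch, not part of the C# walkthrough: the function name is_valid_bucket_name is hypothetical, and it covers only the basic rules for general-purpose buckets (3 to 63 characters; lowercase letters, digits, dots and hyphens; a letter or digit at both ends; no adjacent dots; not shaped like an IP address).

```shell
#!/bin/sh
# Hypothetical helper: returns 0 if $1 satisfies the basic S3 bucket naming rules.
is_valid_bucket_name() {
  name=$1
  len=${#name}
  # Length must be between 3 and 63 characters.
  [ "$len" -ge 3 ] && [ "$len" -le 63 ] || return 1
  # Only lowercase letters, digits, dots and hyphens; letter or digit at both ends.
  printf '%s\n' "$name" | LC_ALL=C grep -Eq '^[a-z0-9][a-z0-9.-]*[a-z0-9]$' || return 1
  # No two adjacent dots.
  case $name in
    *..*) return 1 ;;
  esac
  # Must not be formatted like an IPv4 address.
  if printf '%s\n' "$name" | LC_ALL=C grep -Eq '^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$'; then
    return 1
  fi
  return 0
}

# Try a few candidate names.
for candidate in systut-data-test My_Bucket ab 192.168.0.1; do
  if is_valid_bucket_name "$candidate"; then
    echo "$candidate: ok"
  else
    echo "$candidate: invalid"
  fi
done
```

A name that passes this check can still be taken by another account; only an actual creation attempt, for example with aws s3 mb s3://systut-data-test, settles that.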

Now let's make sure that the data has really been written to S3. After you see the S3 object in the S3 browser of the EC2Studio add-in, double-click it and select a program to show its content. The screenshot shows that the S3 object has been created and its content is opened in Notepad. To read the S3 object from C# code:

    // Requires: using System.IO;
    GetObjectRequest request = new GetObjectRequest();
    request.WithBucketName(BUCKETNAME);
    request.WithKey(S3KEY);
    GetObjectResponse response = client.GetObject(request);
    StreamReader reader = new StreamReader(response.ResponseStream);
    string content = reader.ReadToEnd();

Metadata. In addition to its content, an S3 object can have metadata (key/value pairs) associated with it. Here is an example of how this can be done:

    // Requires: using System.Collections.Specialized;
    CopyObjectRequest request = new CopyObjectRequest();
    request.DestinationBucket = BUCKETNAME;
    request.DestinationKey = S3KEY;
    request.Directive = S3MetadataDirective.REPLACE;
    NameValueCollection metadata = new NameValueCollection();
    // Each user-defined metadata key must start with "x-amz-meta-".
    metadata.Add("x-amz-meta-test", "Test data");
    request.AddHeaders(metadata);
    // Source and destination are the same object.
    request.SourceBucket = BUCKETNAME;
    request.SourceKey = S3KEY;
    client.CopyObject(request);

Amazon S3 does not have a special API call to associate metadata with an S3 object.

Instead, the copy method is called, here copying the object onto itself with replaced metadata. Moreover, S3 has access control that limits which users can access the data (see more about ACL access below). And not only who, but also when: the Amazon SDK API can generate a signed URL that is valid for a limited time only. Here is code that creates a URL valid for a week:

    GetPreSignedUrlRequest request = new GetPreSignedUrlRequest()
        .WithBucketName(BUCKETNAME)
        .WithKey(S3KEY);
    // Expire the URL seven days from now.
    request.WithExpires(DateTime.Now.Add(new TimeSpan(7, 0, 0, 0)));
    string url = client.GetPreSignedURL(request);

The same can be done through the EC2Studio add-in. You can then send the URL to anyone and be sure that their access to your data stops after the defined time.

When talking about hosting static web content on Amazon S3, the access logging feature should be mentioned, because you should know who accesses your website and when.
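The week-long validity of the signed URL can also be expressed in seconds, which is the unit the aws command line tool expects for its presign command. The sketch below is a shell counterpart to the C# snippet under stated assumptions: the helper names days_to_seconds and presign_for_days are hypothetical, and presign_for_days only works where the aws CLI is installed and configured, so it is defined but not called here.

```shell
#!/bin/sh
# Hypothetical helper: convert a number of days to seconds.
days_to_seconds() {
  echo $(( $1 * 24 * 60 * 60 ))
}

# Hypothetical wrapper around "aws s3 presign"; needs a configured aws CLI.
# Usage: presign_for_days BUCKET KEY DAYS
presign_for_days() {
  aws s3 presign "s3://$1/$2" --expires-in "$(days_to_seconds "$3")"
}

days_to_seconds 7   # prints 604800
```

Seven days (604800 seconds) is also the longest validity accepted for URLs signed with Signature Version 4, so a week is the practical upper bound for this approach.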

Amazon S3 is a widely used public cloud storage system. S3 allows a single object/file to be very large, which is enough for most applications.

The AWS Management Console provides a web-based interface for users to upload and manage files in S3 buckets. However, uploading a large file of hundreds of GB is not easy using the web interface; in my experience, it fails frequently. There are various third-party commercial tools that claim to help people upload large files to S3, and Amazon also provides an API on which most of these tools are based. While these tools are helpful, they are not free, and AWS already provides users with a pretty good tool for uploading large files to S3: the aws command line tool from Amazon. In my tests, the aws s3 command line tool achieved an upload speed of more than 7MB/s on a shared 100Mbps network, which should be good enough for many situations and network environments.
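To put the 7MB/s figure in perspective, a rough transfer time can be worked out for a file of a given size. The shell sketch below is a back-of-the-envelope estimate only: the function name estimate_upload_time is hypothetical, it assumes a constant rate, treats 1 GB as 1024 MB, and uses integer arithmetic, so it rounds down.

```shell
#!/bin/sh
# Hypothetical helper: estimate transfer time.
# Usage: estimate_upload_time SIZE_IN_GB RATE_IN_MB_PER_S
estimate_upload_time() {
  size_gb=$1
  rate_mb_s=$2
  # Total seconds = size in MB divided by the rate in MB/s (integer division).
  total_s=$(( size_gb * 1024 / rate_mb_s ))
  echo "$(( total_s / 3600 ))h $(( total_s % 3600 / 60 ))m"
}

estimate_upload_time 150 7   # prints 6h 5m
```

At 7MB/s, a 150GB file such as the one uploaded later in this post needs roughly six hours.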

In this post, I will give a tutorial on uploading large files to Amazon S3 with the aws command line tool.

Install aws CLI tool

Assume that you already have a Python environment set up on your computer. You can install the aws tools using pip or using the bundled installer:

    $ curl "..." -o "awscli-bundle.zip"
    $ unzip awscli-bundle.zip
    $ sudo ./awscli-bundle/install -i /usr/local/aws -b /usr/local/bin/aws
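For the pip route, a small guard avoids reinstalling when the tool is already on the PATH. This is a sketch under assumptions: the function names is_installed and ensure_awscli are hypothetical, awscli is the package name of the AWS CLI on PyPI, and ensure_awscli is defined but not run here because it would modify the local Python environment.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if the given command is found on the PATH.
is_installed() {
  command -v "$1" >/dev/null 2>&1
}

# Hypothetical helper: install the AWS CLI with pip only when it is missing.
ensure_awscli() {
  if is_installed aws; then
    echo "aws found at $(command -v aws)"
  else
    pip install --user awscli
  fi
}
```

Calling ensure_awscli once is enough; with --user, pip installs into the current user's directories, so the resulting aws script may need its location added to the PATH.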

Try running aws after installation. If you see output like the following, it was installed successfully.

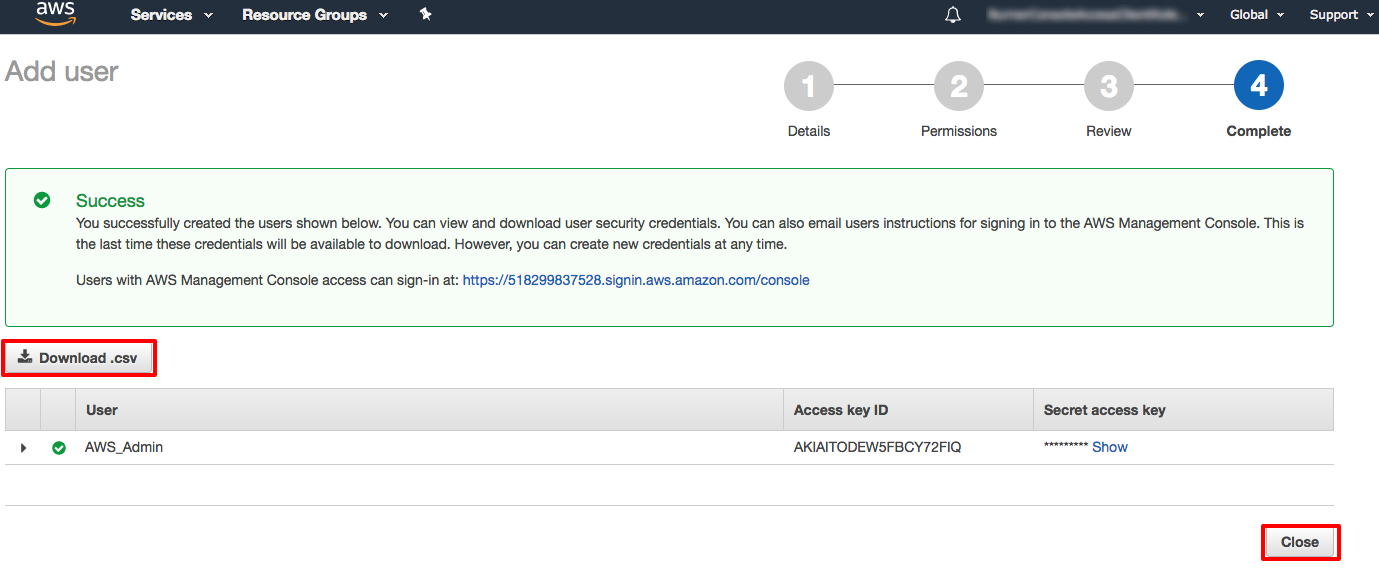

    $ aws
    usage: aws [options] <command> <subcommand> [parameters]
    To see help text, you can run:
      aws help
      aws <command> help
      aws <command> <subcommand> help
    aws: error: too few arguments

Configure aws tool access

The quickest way to configure the AWS CLI is to run the aws configure command:

    $ aws configure
    AWS Access Key ID: foo
    AWS Secret Access Key: bar
    Default region name [us-west-2]: us-west-2
    Default output format [None]: json

Here, your AWS Access Key ID and AWS Secret Access Key can be found on the AWS Console.

Uploading large files

Finally, the fun part.

Here, assume we are uploading the large file ./150GB.data to s3://systut-data-test/storedir/ (that is, the directory storedir under the bucket systut-data-test), and that the bucket and directory have already been created on S3. The command is:

    $ aws s3 cp ./150GB.data s3://systut-data-test/storedir/

After it starts to upload the file, it prints a progress message like

    Completed 1 part(s) with ... file(s) remaining

at the beginning, and a progress message like the following when it is reaching the end:

    Completed 9896 of 9896 part(s) with 1 file(s) remaining

After it successfully uploads the file, it prints a message like

    upload: ./150GB.data to s3://systut-data-test/storedir/150GB.data

aws has more commands for operating on files in S3. I hope this tutorial helps you get started with it. Check the AWS CLI documentation for more details.
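The part counts in the progress messages come from S3 multipart upload, which accepts at most 10,000 parts per object, each at least 5 MiB except the last. The sketch below works out the smallest part size that keeps a file of a given size within that limit. The function name min_part_size_bytes is hypothetical, and the arithmetic says nothing about the part size the aws tool actually chose for the upload shown here.

```shell
#!/bin/sh
MAX_PARTS=10000                          # S3 limit on parts per multipart upload
MIN_PART_BYTES=$(( 5 * 1024 * 1024 ))    # S3 minimum part size (5 MiB)

# Hypothetical helper: smallest allowed part size, in bytes, for a file of $1 bytes.
min_part_size_bytes() {
  size_bytes=$1
  # Ceiling division: smallest part size that needs no more than MAX_PARTS parts.
  part=$(( (size_bytes + MAX_PARTS - 1) / MAX_PARTS ))
  # Never go below the S3 minimum part size.
  if [ "$part" -lt "$MIN_PART_BYTES" ]; then
    part=$MIN_PART_BYTES
  fi
  echo "$part"
}

min_part_size_bytes $(( 150 * 1024 * 1024 * 1024 ))   # prints 16106128
```

That is about 15.4 MiB per part for a 150 GiB file. If needed, the part size used by the CLI can be set explicitly, for example with aws configure set default.s3.multipart_chunksize 16MB.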